The Serendipity of Claude AI: Case of the 13 Low-Resource National Languages of Mali

Authors: Alou DEMBELE, Nouhoum Souleymane COULIBALY, Michael LEVENTHAL. RobotsMali AI4D Lab, Bamako, Mali, research@robotsmali.org

Keywords

national language – Artificial Intelligence – Education – Claude AI – Low-resource languages – Linguistic technologies.

Abstract

Recent advances in artificial intelligence (AI) and natural language processing (NLP) have improved the representation of underrepresented languages. However, most languages, including Mali This situation appears to have slightly improved with certain recent LLM releases. The study evaluated Claude AI In addition to ChrF2 and BLEU scores, human evaluators assessed translation accuracy, contextual consistency, robustness to dialect variations, management of linguistic bias, adaptation to a limited corpus, and ease of understanding. The study found that Claude AI performs robustly for languages with very modest language resources and, while unable to produce understandable and coherent texts for Malian languages with minimal resources, still manages to produce results which demonstrate the ability to mimic some elements of the language.

1. Introduction

In recent years, advances in artificial intelligence (AI) and natural language processing (NLP) have brought significant progress in the representation and development of underrepresented languages. However, most of the world

Mali, a multilingual country with 13 official languages, including Bamanankan (Bambara), Bomu, Bozo, Dɔgɔsɔ (Dogon), Fulfulde (Fula), Hassaniya Arabic, Mamara (Minyanka), Maninka, Soninke, Songhay, Senara, Tamàsàyt (Tamasheq) and Xaasongaxanno (Kassonke), faces several challenges in digital inclusion that limit economic development, educational advancement, and preservation of cultural heritage (Bird, 2020; Nekoto et al., 2020). These languages share a scarcity of the language resources needed to train AI and NLP systems which could play a role in closing the digital divide (Hammarström et al., 2017). This scarcity ranges from severe, in the case of a language like Bambara, which has very limited resources, to dire for languages like Bomu and Bozo, which have an almost complete absence of language resources.

The need for innovative methods for low-resource languages has spawned varied strategies, such as transfer learning, zero-shot learning, and pre-trained models in related languages (Ruder, 2021; Adelani et al., 2022). Claude AI, like other advanced models, uses cross-lingual transfer to fill the resource gap by applying knowledge from better-resourced languages to less-resourced languages (Conneau et al., 2020). Although promising, these methods may still be challenged by languages whose typological and structural characteristics differ widely from those of more resource-rich languages (Pires et al., 2019).

By examining the effectiveness of Claude AI in supporting Mali Improving AI tools for low-resource languages could provide Mali

Our evaluations of Claude Our researchers circumvented this restriction using a VPN and phone numbers in unblocked countries. Despite the exclusion of Malian users, Claude AI manages to translate certain languages of Mali without having received specific training for them. It can understand and produce translations in less-represented languages, including several Malian languages, by exploiting knowledge of common linguistic structures and contexts.

2. Literature review

Most AI and NLP models still struggle with low-resource languages, which suffer from a shortage of digitized data, annotated corpora, and linguistic resources (Joshi et al., 2020).

Claude AI According to Enis and Hopkins (2024), Claude 3 Opus shows strong proficiency when translating low-resource languages into English, outperforming Google Translate and Meta They noted, however, that "Claude performs meaningfully better when translating into English than from English," a limitation affecting African languages that are less widely studied in machine translation (MT) research (Enis & Hopkins, 2024).

Enis and Hopkins (2024) showed that Claude outperformed these models in 25% of the languages evaluated, although its performance varied depending on the translation direction and the dataset used.

In a survey study, Sunyu Transphere (2024) examined Claude They reported that Claude

Enis and Hopkins (2024) argue for the expansion of high-quality linguistic resources for African languages, a move that could help refine Claude Currently, they suggest that the application of Claude in these contexts is better suited to translation into English rather than from English, where it continues to face accuracy issues (Enis & Hopkins, 2024; Sunyu Transphere, 2024).

The challenge of low-resource languages in NLP

African languages, especially those of Mali, present an additional layer of complexity as they often feature dialectal variations, complex phonetic and grammatical systems, and limited historical documentation (Hammarström et al., 2017; Orife et al., 2020). The development of precise NLP models for these languages has therefore become an important area of research in recent years (Ruder et al., 2021).

Claude AI's approach to low-resource languages

Claude AI is trained to handle numerous languages, leveraging cross-linguistic transfer learning and other cutting-edge techniques. However, models like Claude AI are typically trained on broad, multilingual datasets that heavily favor languages with a significant digital presence, leaving low-resource languages with limited support (Smith et al., 2022). The effectiveness of Claude AI on languages such as Bambara and Songhay is further complicated by their phonological diversity. Cross-linguistic transfer, which involves applying knowledge from resource-rich languages to low-resource languages, can be effective, but often does not take into account the linguistic nuances essential for good understanding and translation (Conneau et al., 2020; Pires et al., 2019).

Challenges specific to the languages of Mali

Mali Languages like D (Hammarström et al., 2017). Additionally, the languages of Mali are predominantly oral, with few printed works and fewer still in electronic form, restricting the corpora available for model training (Bird, 2020). Malian languages are weakly supported by standardized spelling systems and linguistic resources such as dictionaries, making it difficult to pre-train and evaluate models with consistent data (Joshi et al., 2020).

Bambara is the best resourced of the Malian languages, with a standardized spelling and an online dictionary adhering to this standard, an 11-million-word electronic corpus hosted by INALCO, and a curated machine learning dataset of approximately 50,000 aligned French-Bambara sentences created by RobotsMali (Tapo et al., 2020).

3. Methodology

A comprehensive suite of human and machine tests was performed for each language.

- Translation of text

- Comparison to reference texts

- Automated scoring (BLEU and chrF)

Claude AI performance evaluation criteria for low-resource languages in Mali

To evaluate Claude AI effectively on low-resource Malian languages, criteria were developed that match the linguistic and technical particularities of these languages, in order to clearly understand its performance and identify avenues for improvement.

Translation accuracy: Evaluate the fidelity of the translations produced by Claude, taking into account the lexical, syntactic and semantic particularities specific to the languages of Mali, in particular its ability to reproduce specific idiomatic and cultural expressions, including those from linguistically isolated communities (e.g. Dogon, Tamasheq).

Contextual consistency: Measure the ability of the AI to maintain consistency in texts where dialect variations are present, such as those observed in Senara and Dogon, especially for complex local language text generation tasks.

Robustness in the face of dialect variations: Observe whether Claude correctly manages the subtle differences between sub-dialects of languages like Dogon and Songhay, maintaining precision and consistency despite non-standardized spelling variations.

Management of linguistic biases: Identify and limit Claude

Adaptation to a limited corpus: Evaluate Claude

Ease of understanding and use: Test the fluency and understanding of the output generated by Claude with native speakers and domain specialists, particularly for practical applications such as the translation of administrative documents.

Data sources and collection

The 13 official languages of Mali, including Bambara, Fula, Soninke, and others, are only modestly equipped with digital corpora. Collecting data for these languages required varied sources to assess Claude

Geographic and demographic context

Located in West Africa, Mali is a country of great linguistic diversity with approximately 22 million inhabitants, distributed between urban areas such as Bamako and rural areas. The 13 official national languages, alongside French, reflect this cultural diversity and constitute a relevant basis for the evaluation of AI in this context.

Evaluators

There are two official, governmental organizations in Mali that serve as the ultimate authorities on each of Mali

- Malian National Directorate of Non-Formal Education and National Languages (DNENF-LN)

The DNENF-LN focuses, essentially, on the use of Mali Since formal education is currently in French, the Directorate concerns itself with non-formal education, that is, education outside the K-12 classroom setting. There is one language unit for each national language of Mali.

- Malian National Academy of Languages (AMALAN)

AMALAN is essentially a linguistic body responsible for the standardization and development of each national language. There is a linguistic unit for each national language of Mali.

Materials and resources used

Linguistic corpora: Texts written in each of the thirteen languages and French, extracted from the Malian Mining Code and from the story "Niamoye", with parallel translations in 12 of the 13 national languages of Mali and French.

4. Presentation and analysis of results

Automatic metrics, BLEU and ChrF++, could be applied to these texts and proved broadly meaningful for quick, preliminary evaluation, particularly for comparing supported languages with significant resources. However, human evaluation, on both the materials with reference translations and on other collected text samples, was used to judge fluency, fidelity to meaning and cultural appropriateness. This type of evaluation provided the only meaningful feedback for the most resource-poor languages.

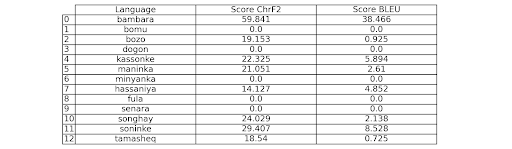

Table 1: Claude

Test 1: Text by Niamoye

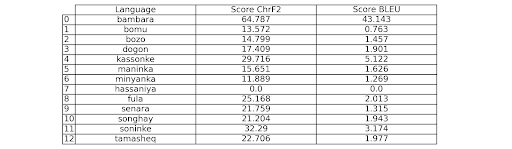

Table 2: ChrF2 and BLEU scores when translating from French into each of the languages of Mali

Test 2: Text of Mali

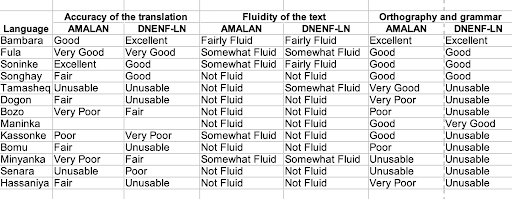

Table 3: Evaluation by experts of AMALAN and the DNENF-LN

Text of Niamoye

Four of the 13 languages were rated good by the human assessors, namely Bambara, Fula, Soninke and Songhay.

General Summary:

The analysis assesses the quality of translations of texts from French into the thirteen Malian languages, taking into account fidelity to the source text, fluency, consistency and linguistic quality.

The reference texts were created and validated for correctness by human experts in French and all Malian languages, with the exception of Hassaniya Arabic for Niamoye. The French text was translated by Claude AI into each Malian language and ChrF2 and BLEU scores were measured against the reference translations as presented in Tables 1 and 2.
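To make the automated scoring step concrete, the following is a minimal sketch of a character n-gram F-score in the spirit of chrF with beta = 2, comparing one machine translation against one reference. It is a simplified illustration and not the scoring code used in this study: the function names `char_ngrams` and `chrf_sketch` are hypothetical, whitespace is simply dropped, and the values it returns are not guaranteed to match those of standard tools.

```python
from collections import Counter


def char_ngrams(text, n):
    """Count character n-grams of order n, ignoring all whitespace."""
    chars = "".join(text.split())
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


def chrf_sketch(hypothesis, reference, max_n=6, beta=2.0):
    """Simplified chrF-style score on a 0-100 scale (illustrative only).

    Precision and recall are averaged over n-gram orders 1..max_n,
    then combined with an F-beta score; beta=2 weights recall more.
    """
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp = char_ngrams(hypothesis, n)
        ref = char_ngrams(reference, n)
        if not hyp or not ref:
            continue  # one of the texts is shorter than n characters
        overlap = sum((hyp & ref).values())  # clipped n-gram matches
        precisions.append(overlap / sum(hyp.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    p = sum(precisions) / len(precisions)
    r = sum(recalls) / len(recalls)
    if p + r == 0:
        return 0.0
    b2 = beta ** 2
    return 100 * (1 + b2) * p * r / (b2 * p + r)
```

Because the score is built from character sequences rather than whole words, a translation can obtain a non-trivial score by reproducing frequent letter patterns of the target language, which is worth keeping in mind when reading scores for the least-resourced languages.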

Bambara has, by a considerable margin, the highest quantitative scores (64.8, 59.8 for ChrF2 and 43.1, 38.5 for BLEU for the two texts). Kassonke and Soninke show moderately high scores, suggesting that Claude AI also has significant abilities in these languages.

The human evaluation of translations carried out by the DNENF-LN and the AMALAN supported some of the results identified by automated scoring and contradicted others. Bambara and Soninke had highly positive evaluations by both human and automated scoring, while Kassonke, despite its moderately high automated scores, was not rated well by the human assessors. Fula, which from automated scoring seemed not to be meaningfully translated by Claude AI, was appreciated by the human assessors as approximately equal to Bambara and Soninke. Songhay was also evaluated much more highly by humans than its automated scores would have suggested.

The results correspond to the quantity of digital resources available in each language. Our team has created, worked with, or is aware of significant resources in Bambara, Soninke, Fula, and Songhay, all languages for which human assessors gave good marks to the automated translations. A surprise in the results is the ability of Claude AI to produce any meaningful result in languages for which there are virtually no digital resources. The relatively high automated evaluation scores for Kassonke, combined with low scores in human evaluation of the meaningfulness of the text, suggest a considerable ability to mimic language from very small samples without currently being able to produce intelligible text. The contrary lesson might be drawn from the results with Fula, where poor automated scores alongside good human evaluations suggest that Claude AI was able to translate the essence of the ideas covered by the text, causing it to forgo mimicry and produce a meaningful text with the vocabulary at its disposal.
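One way to quantify how far automated and human evaluations agree across languages is a rank correlation. The sketch below computes Spearman's rank correlation between two lists of per-language scores. It is offered as an illustration under stated assumptions, not as an analysis performed in this study: the function names are hypothetical, and the numbers in the usage example are toy values, not results from the tables above.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long lists of at least 2 items")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        return float("nan")  # undefined when one list is constant
    return cov / (vx * vy) ** 0.5


# Toy values for four hypothetical languages (NOT data from this study).
automated_scores = [60.0, 20.0, 40.0, 10.0]
human_ratings = [4, 3, 2, 1]
rho = spearman(automated_scores, human_ratings)
```

A value near 1 would indicate that the two kinds of evaluation order the languages similarly, while a low or negative value would signal the kind of disagreement described for Kassonke and Fula.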

Conclusion

Claude This natural capacity demonstrates the value of pushing further by enriching the corpora accessible to LLMs in these languages.

Our investigation demonstrates that automated metrics may, in some cases, fail catastrophically in the assessment of translation quality for extremely low-resourced languages. They are more reliable for languages with moderate resources, but it may still be difficult to assess translation performance without human assessment. Human assessors provide a more qualified analysis of qualitative issues, specifically the grammaticality and semantic fidelity that must be judged to assess the ability of the model in the target language.

Cross-linguistic transfer and multilingual pre-training techniques have shown promising results, suggesting that the bar for the quantity of resources needed to obtain useful results has fallen significantly below what was previously assumed to be necessary. It appears that relatively robust results for Bambara can be obtained despite its very small quantity of online data and curated training data. While the bar is not sufficiently low to enable translation into languages with the most limited resources, our results suggest that the effort to assemble even modest levels of resources for these languages may prove fruitful. It may also give additional incentive to work on further enhancing the interlinguistic capabilities of LLMs, so that the resources available and the capabilities of the LLMs may advance the state of the art for low-resource languages more quickly. We may not yet have reached the limits of the interlingual abilities of language models.

Bibliographic references

- Adelani, D.I., et al. (2022). A Few Thousand Translations Go a Long Way! Leveraging Pre-trained Models for African News Translation. Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics, 2812-2823.

- Anastasopoulos, A., et al. (2020). TICO-19: The Translation Initiative for COVID-19. arXiv preprint arXiv:2010.04650.

- Bird, S. (2020). Decolonising Speech and Language Technology. Proceedings of the 28th International Conference on Computational Linguistics, 3504-3519.

- Conneau, A., et al. (2020). Unsupervised Cross-lingual Representation Learning at Scale. Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 8440-8451.

- Hammarström, H., et al. (2017). Glottolog 3.0. Max Planck Institute for the Science of Human History.

- Joshi, P., et al. (2020). The State and Fate of Linguistic Diversity and Inclusion in the NLP World. Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 6282-6293.

- Nekoto, W., et al. (2020). Participatory Research for Low-Resourced Machine Translation: A Case Study in African Languages. Findings of the Association for Computational Linguistics: EMNLP 2020, 2144-2160.

- Pires, T., et al. (2019). How Multilingual is Multilingual BERT? Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, 4996-5001.

- Ruder, S. (2021). Why You Should Do NLP Beyond English. Blog post, https://ruder.io.

- Smith, T., et al. (2022). Performance of Advanced Large Language Models in Low-Resource Contexts: A Survey and Benchmark Analysis. Journal of Artificial Intelligence Research, 74, 89-102.

- Tapo, A.A., et al. (2020). Neural Machine Translation for Extremely Low-Resource African Languages: A Case Study on Bambara. arXiv preprint arXiv:2011.05284.

- Artetxe, M., et al. (2019). Unsupervised Translation of Dialects and Related Languages by Lexicon Induction. North American Chapter of the Association for Computational Linguistics, 75-84.

- Guzmán, F., et al. (2019). The FLORES Evaluation Datasets for Low-Resource Machine Translation: Nepali-English and Sinhala-English. Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing, 6100-6113.

- Orife, I., et al. (2020). Masakhane: Towards Open and Responsible NLP for African Languages. Findings of the Association for Computational Linguistics: EMNLP 2020, 3144-3157.

- Ruder, S., et al. (2021). The Current State of Cross-lingual NLP. Transactions of the Association for Computational Linguistics, 9, 127-145.

- Enis, A., & Hopkins, J. (2024). Performance of Claude 3 Opus in low-resource language translation. Journal of Machine Translation Research.

- Sunyu Transphere (2024). Evaluating Claude Natural Language Processing Insights.

- FLORES-200 Dataset. Benchmark for evaluating low-resource language translation models.